Affiliate Disclaimer:

Some of the links in this post are affiliate links. This means we may earn a small commission if you click through and make a purchase, at no extra cost to you. We only recommend tools we truly believe offer value.

1. When Was AI Invented?

One day, AI feels like it suddenly appeared everywhere—writing emails, editing photos, answering questions—and we catch ourselves wondering: “Wait… when did all of this actually start?”

If it feels new, you’re not alone. Most of us only noticed AI once tools became fast, visible, and useful in daily life. That’s why searches for “when was AI invented” keep rising: not just out of curiosity, but because we’re trying to make sense of something that now affects our work, our privacy, and our decisions.

What we often miss is that AI didn’t arrive overnight. It didn’t start with chatbots or image generators. And it definitely didn’t begin in the last few years. The history of artificial intelligence stretches back decades, following a long and often misunderstood AI timeline shaped by trial, failure, and renewed breakthroughs.

In this article, we’ll walk through the real moments that shaped modern AI, without technical overload or hype. We’ll clarify:

when AI was actually invented,

why it took decades to become useful,

and how early AI research still influences the tools we use today.

Understanding this timeline—and the evolution of AI behind it—helps us use modern systems more realistically, and avoid expecting them to do things they were never designed to do.

Recommended Reading

If you want a deeper, clearer understanding of how AI evolved over time and why it works the way it does today, Artificial Intelligence: A Guide for Thinking Humans by Melanie Mitchell is one of the most balanced and accessible reads available. It connects early AI ideas to modern machine learning and generative tools — without hype or technical overload.

Good to know: at the moment, the audiobook version is often available for free through Amazon Audible trials, making it an easy way to explore the topic while commuting or working.

2. Breakthroughs #1–#2: Where Artificial Intelligence Really Began

When we look back, AI didn’t begin as a product—it began as a question. Researchers weren’t trying to build assistants or apps. They were asking a simpler, deeper thing: can a machine think, or at least behave as if it does?

That question led to the first two breakthroughs that still define AI today.

| Breakthrough | What happened | Why it matters |
|---|---|---|
| 1. Dartmouth Conference (1956) | Researchers officially introduced the term “artificial intelligence” | It defined AI as a field focused on reasoning, not just automation |
| 2. Early Symbolic AI | Computers followed predefined rules to mimic human logic | This shaped expectations that AI should “think” like humans |

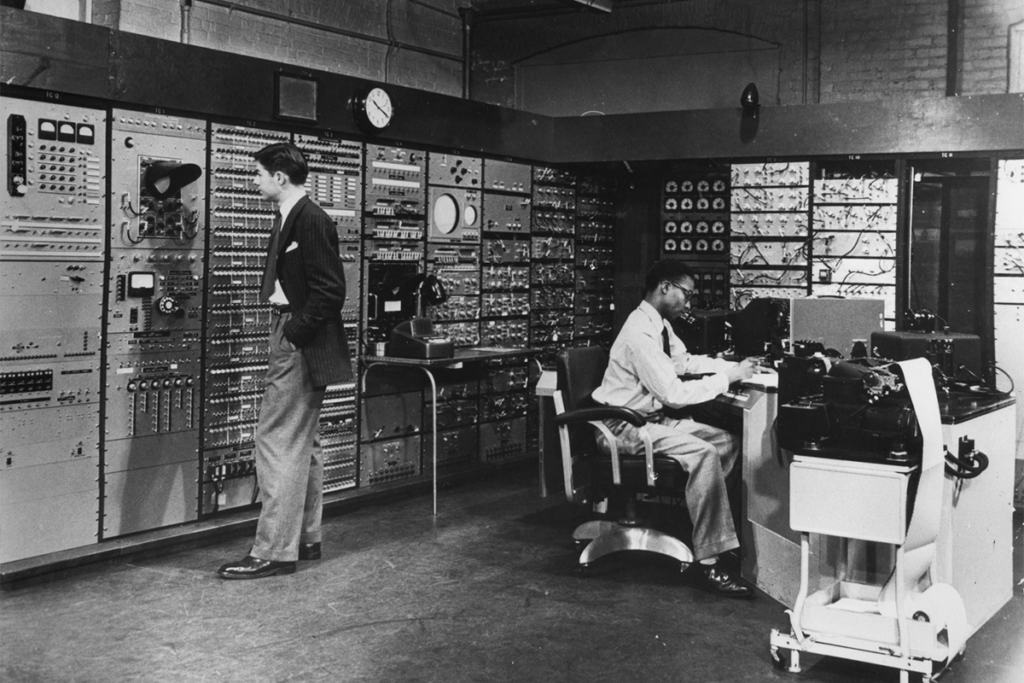

At the Dartmouth Conference, a small group of scientists believed that human intelligence could be described with rules and could therefore be replicated by machines. This moment is widely considered the starting point in the history of artificial intelligence, and it’s the date many researchers give for when AI was invented as a formal field. It was ambitious, optimistic, and, in hindsight, a little naïve. But it gave AI its first real identity.

Soon after, early systems tried to “think” by following strict logic: if this happens, do that. These programs didn’t learn. They didn’t adapt. Yet, during this early phase of AI research, machines could already solve puzzles, play games, and follow reasoning paths that looked human from the outside—at least within very controlled environments.

This matters because many expectations—and misunderstandings—about AI were born at this point in the AI timeline. Even today, we often assume AI understands things the way humans do. In reality, that assumption comes directly from these early symbolic ideas, not from how modern systems actually work.

And as we’ll see next, this growing gap between ambition and reality is exactly why the early evolution of AI would soon hit its first major wall.

3. Breakthrough #3: Why AI Failed (and Almost Disappeared)

At some point, the excitement collapsed.

After the early breakthroughs in the history of artificial intelligence, expectations around AI grew much faster than reality. Researchers, governments, and companies believed machines would soon understand language, reason like humans, and solve complex problems. That didn’t happen—and the AI timeline quickly took a very different turn.

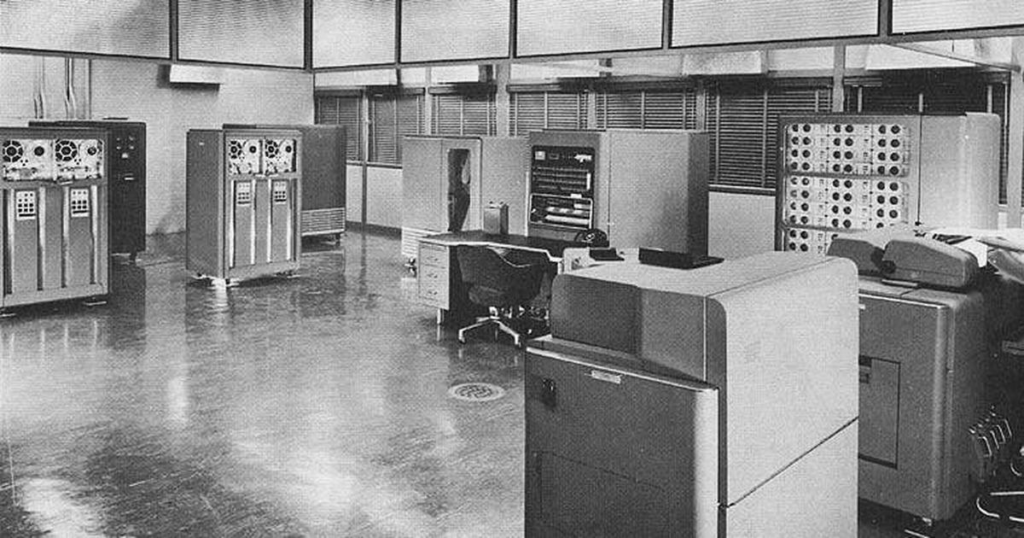

What followed is what we now call AI winters: long periods, most notably in the mid-1970s and again from the late 1980s, when funding dried up, public interest faded, and progress slowed almost to a stop. These phases marked a critical moment in the early evolution of AI, showing how far reality still was from the vision the field had started with.

The core problem was simple: early AI systems were too rigid. They relied on hand-written rules and logic created by humans. As soon as the real world became messy, unpredictable, or ambiguous, those systems broke down. They couldn’t learn from data, adapt to new situations, or improve on their own—limitations that defined much of early AI research.
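To make that rigidity concrete, here is a minimal sketch of a rule-based classifier in the spirit of early symbolic AI. The rules, the fact names, and the `classify` function are all hypothetical, invented purely for illustration; they are not taken from any historical system.

```python
# Hypothetical hand-written rules: every condition in the tuple must be
# present in the observed facts for the label to apply.
RULES = {
    ("has_feathers", "can_fly"): "bird",
    ("has_fur", "barks"): "dog",
}

def classify(facts):
    """Return the label of the first rule whose conditions all hold."""
    for conditions, label in RULES.items():
        if all(condition in facts for condition in conditions):
            return label
    return None  # no rule matched, so the system has no answer at all

print(classify({"has_feathers", "can_fly"}))  # bird
print(classify({"has_feathers", "swims"}))    # None
```

The second call describes something like a penguin. Because nobody wrote a rule for it, the program simply has no answer, and the only fix is for a human to add yet another rule by hand.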

This growing gap between promises and results led to widespread disappointment. Many concluded that AI had been overhyped, or worse, that the field’s original ambition wasn’t realistically achievable at all.

If you want an authoritative overview of this phase, the history is well documented by academic and institutional sources such as IBM’s overview of AI history and AI winters.

Why does this matter today? Because the memory of those failures still shapes how modern AI is built. It’s one of the main reasons today’s systems focus on learning from data rather than trying to “think” through fixed rules.

And it sets the stage for the next turning point—when AI finally started working because the approach changed, not because computers became magically smarter.

4. Breakthroughs #4–#5: How Modern AI Finally Took Off

After years of false starts, something fundamental changed. AI didn’t suddenly become “smarter”—the way we built it changed.

Instead of telling machines how to think, researchers began letting them learn from data. This shift unlocked the two breakthroughs that finally pushed AI forward.

| Breakthrough | What changed | Why it worked this time |
|---|---|---|
| 4. Machine Learning | Algorithms learned patterns directly from large datasets | Systems improved with experience instead of fixed rules |
| 5. Deep Learning & Scale | Neural networks, more data, and stronger computing power | Accuracy jumped once models could process real-world complexity |

This is the moment AI became far less brittle. In the evolution of AI, machine learning marked a clear turning point: systems could finally adapt instead of relying on fixed rules. Deep learning then made it possible to handle images, speech, and language at scale. More data meant better results, and more computing power meant faster improvement, which reshaped the later stages of the AI timeline.
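For readers who like to see the idea in code, here is a minimal sketch of learning from examples instead of writing rules. It uses a tiny perceptron-style update on a toy dataset; the data, function names, and number of training passes are assumptions chosen for brevity, and real machine learning systems are vastly larger and trained differently in detail.

```python
# Toy training data: two inputs and the answer we want (here, logical OR).
EXAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

def predict(weights, bias, inputs):
    """Answer 1 if the weighted sum of the inputs is positive, else 0."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0

def train(examples, epochs=10):
    """Nudge the weights after every wrong answer; nobody writes a rule."""
    weights, bias = [0, 0], 0
    for _ in range(epochs):
        for inputs, target in examples:
            error = target - predict(weights, bias, inputs)
            weights = [w + error * x for w, x in zip(weights, inputs)]
            bias += error
    return weights, bias

untrained = [predict([0, 0], 0, inputs) for inputs, _ in EXAMPLES]
weights, bias = train(EXAMPLES)
trained = [predict(weights, bias, inputs) for inputs, _ in EXAMPLES]

print(untrained)  # [0, 0, 0, 0]
print(trained)    # [0, 1, 1, 1]
```

The program starts out answering 0 to everything and ends up matching all four examples. The behavior comes from the data and the repeated corrections, not from an instruction anyone typed in.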

It’s also why AI feels so different today. Modern tools don’t follow rigid instructions anymore. They predict, adjust, and refine their output based on patterns they’ve seen before, learned through years of large-scale training. This shift explains why assistants, translators, and image generators suddenly became usable in everyday life, long after AI was invented as a research field.

Understanding this change helps us see both sides of modern AI. It shows why today’s systems are powerful—but also why they can still fail in unexpected ways. In the next section, we’ll connect these breakthroughs to the tools we use every day, and explain what they can (and can’t) realistically do, based on the full history of artificial intelligence.

5. What These Breakthroughs Explain About Today’s AI Tools

At this point, a lot of things finally click.

Modern AI tools don’t work because they “understand” us. They work because decades of breakthroughs taught machines how to recognize patterns at scale—in text, images, voices, and behavior.

That’s why today’s AI feels impressive and unpredictable at the same time.

| What AI does well today | Why it works | What to keep in mind |
|---|---|---|
| Writing, summarizing, translating | Language models learned from massive text datasets | Confidence doesn’t equal correctness |
| Image and video generation | Deep learning recognizes visual patterns extremely well | Outputs reflect training data, not real-world intent |
| Recommendations and predictions | Machine learning optimizes for likelihood, not meaning | Bias and feedback loops can form |

This explains a behavior many of us notice: AI tools are excellent assistants, but unreliable decision-makers. They predict what looks right based on past data—not what is objectively true or appropriate in every situation.
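A toy example shows why confidence and correctness can come apart. The sketch below is a deliberately simple next-word predictor that only counts which word followed which in its training text. The text, function names, and numbers are made up for illustration, and real language models are far more sophisticated, but the underlying point carries over: the prediction reflects what was common in the data, not what is true.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for every word, which words followed it in the text."""
    followers = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        followers[current][following] += 1
    return followers

def predict_next(followers, word):
    """Return the most frequent follower and its share of all followers.

    Assumes the word appeared in the training text at least once.
    """
    counts = followers[word]
    best, count = counts.most_common(1)[0]
    return best, count / sum(counts.values())

# Made-up training text in which the false claim is simply more common.
toy_text = "the moon is made of cheese . " * 3 + "the moon is made of rock ."
model = train_bigrams(toy_text)

word, confidence = predict_next(model, "of")
print(word, confidence)  # cheese 0.75
```

The predictor answers “cheese” with 75% confidence because that is what it saw most often. Nothing in the process checks the claim against the real world.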

A clear, authoritative explanation of this limitation comes from Stanford’s Human-Centered AI (HAI) research, which highlights how modern AI systems excel at pattern recognition but lack genuine understanding.

Seen through this lens, today’s AI makes much more sense. It’s powerful because of history—not because it crossed some magic intelligence threshold.

And once we understand why it works the way it does, we can start using it more intentionally, more safely, and with far more realistic expectations.

6. What “AI Was Invented” Really Means Today

So when we ask “when was AI invented?”, what we’re really trying to understand is something deeper: what kind of tool AI actually is, and how it fits into our daily decisions today.

AI wasn’t invented as a single machine or a sudden moment of intelligence. Looking at the history of artificial intelligence, it clearly emerged step by step—through ideas, failures, and breakthroughs that shaped how machines process information, not how they think. This long AI timeline explains why today’s AI can feel incredibly capable in some tasks, and surprisingly limited in others.

Knowing this history helps us reset expectations. It reminds us that AI is best used as a support system, not a replacement for judgment, creativity, or responsibility. When we understand where AI comes from—and how the evolution of AI unfolded—we make better choices about how, and when, to rely on it.

Used well, AI can save time, surface patterns, and reduce friction. Used blindly, it can amplify errors, bias, or false confidence. The difference isn’t the technology itself. It’s how informed we are as users, and how clearly we understand what AI was designed to do.

FAQ

Q: Who invented artificial intelligence?

A: No single person invented it. The term “artificial intelligence” was coined by John McCarthy in the 1955 proposal for the Dartmouth Conference, which took place in the summer of 1956 and is widely considered the official birth of the field.

Q: Why did AI take so long to become useful?

A: Early AI relied on rigid rules and logic. It wasn’t until machine learning, large datasets, and stronger computing power became available that AI could handle real-world complexity.

Q: Is today’s AI fundamentally different from early AI?

A: Yes. Modern AI learns from data instead of following fixed instructions. However, it still doesn’t understand context or meaning the way humans do.

Q: Will AI ever become truly intelligent?

A: That remains an open question. Current AI systems are powerful pattern recognizers, not conscious or self-aware entities. Many researchers believe we’re still far from human-like intelligence, though there is no consensus on how far.

Q: Does understanding AI history actually help me use AI better?

A: Absolutely. It helps set realistic expectations, spot limitations faster, and use AI as a tool—not an authority.

If this guide helped you better understand when AI was invented and why it evolved this way, you may also find these related posts useful: