📅 Published on: July 2, 2025

- Deepfake Awareness in 2025 – Why It Matters More Than Ever

- What Is a Deepfake (and Why It’s Evolving Fast)

- How to Spot a Deepfake (Even When It Looks Real)

- How to Protect Yourself From Deepfakes (And Help Others)

- The Role of Digital Literacy

- Ethical Risks We Need to Watch

- Real Examples You Should Know About

- What Platforms Are Doing (and Where They Fail)

- Final Thoughts and What We Can Do Next

Affiliate Disclosure:

Some links in this post are affiliate links. If you choose to buy through them, we may earn a small commission — at no extra cost to you. Thanks for supporting our work and helping us keep this content free!

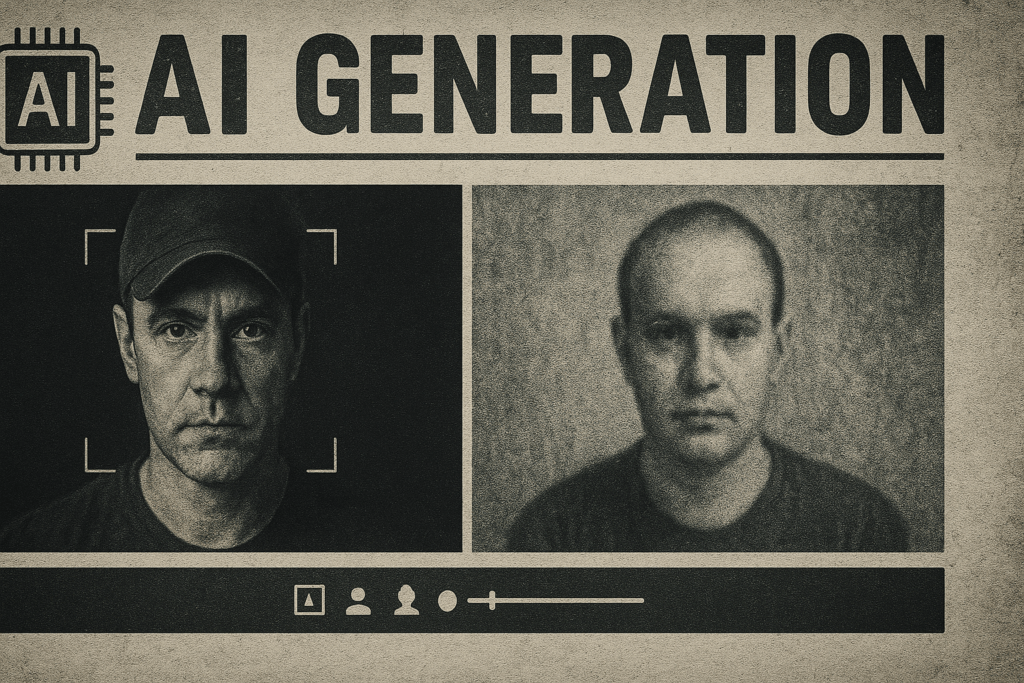

1. Deepfake Awareness in 2025 – Why It Matters More Than Ever

In 2025, it’s not just about what you see or hear — it’s about what you can trust.

Deepfake awareness in 2025 isn’t a niche concern anymore. It’s a survival skill.

AI-generated deepfakes have become alarmingly realistic. We’re no longer talking about quirky filters or celebrity parodies. We’re talking about full-scale voice cloning, video manipulation, and AI-generated content being used for scams and social engineering — and it’s happening to regular people, not just politicians or CEOs.

You could receive a voicemail from your boss…

A FaceTime call from your partner…

A video that looks like you, saying things you’ve never said.

And none of it may be real.

As someone who works with AI tools daily, I’ve come to believe that the real revolution is not in automation, but in authenticity. The more seamless AI becomes, the more valuable it is to return to what’s real, imperfect, and truly human.

But here’s the problem: spotting what’s real is harder than ever.

That’s why deepfake awareness in 2025 isn’t just helpful; it’s critical. We all need to sharpen our eyes and instincts, not just our tech.

If you want to understand how serious this is, check out this excellent piece from MIT Technology Review on the dangers and evolution of deepfakes: How Can Humans Detect Deepfakes

2. What Is a Deepfake (and Why It’s Evolving Fast)

A deepfake is AI-generated content — usually video or audio — designed to recreate a person’s face, voice, or movements in a way that looks and sounds real… but isn’t. As generative AI tools become widely available, deepfake awareness is no longer just a tech topic. It’s a basic digital safety skill.

What started as a niche experiment has quickly turned into a serious risk. Today, deepfakes are commonly used for:

• Scam calls impersonating bosses, colleagues, or family members

• Social media hoaxes with fake celebrity endorsements

• Manipulated video or audio used in legal or political disputes

• Non-consensual explicit content targeting real people

Behind these attacks is generative AI. Deepfakes are typically created with deep learning models such as autoencoders (the basis of classic face-swap tools), GANs (Generative Adversarial Networks), or diffusion models. By learning from large amounts of images, video, and audio, these tools can reproduce a person’s identity with alarming accuracy. This is why AI fraud protection is becoming essential not only for companies but also for everyday users.
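For the curious, the “adversarial” in GAN has a precise meaning. In the original formulation by Goodfellow et al. (2014), a generator G turns random noise z into fake samples, while a discriminator D outputs the probability that a sample is real. Both are trained on a single objective, which D tries to maximize and G tries to minimize:

$$
\min_{G}\,\max_{D}\; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] \;+\; \mathbb{E}_{z \sim p_{z}}\big[\log\big(1 - D(G(z))\big)\big]
$$

Here p_data is the distribution of real training media and p_z is the noise distribution. Because the generator is optimized specifically to fool a learned detector, one way to read this is that its outputs are, by design, hard to tell apart from real data. Diffusion models are trained differently (they learn to reverse a gradual noising process), so this objective describes the GAN family only, not every deepfake tool.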

In short, improving deepfake awareness is now a key part of staying safe, informed, and in control online.

Why deepfakes are getting harder to detect

In the early days, learning how to detect deepfakes was relatively easy. Glitches, stiff facial expressions, or robotic audio often gave them away. In 2025, that’s no longer true.

Modern deepfakes can now include:

• Emotionally realistic voice clones with natural pauses and tone shifts

• Highly accurate facial syncing, even in poor lighting or during movement

• Convincing low-resolution videos that look authentic on social feeds

What makes this more concerning is accessibility. Creating believable deepfakes no longer requires much technical skill. With free apps and online tools, almost anyone can generate realistic fakes in a short time.

This evolution is also driving the rise of biometric deepfakes, which are designed to fool facial recognition, voice authentication, or identity checks. That’s why deepfake awareness and basic AI fraud protection skills are now critical for anyone who uses the internet, not just cybersecurity professionals.

Did you know?

In 2023, Meta revealed that 99% of AI-generated political ads were flagged only after user reports. Automated systems failed to catch them in real time — highlighting how limited detection tools still are.

This challenge has only grown since then. Even in 2025, most platforms continue to rely heavily on human reporting rather than reliable automatic detection.

Raising deepfake awareness today means understanding how this technology works, recognizing warning signs, and knowing how to react when something feels off.

3. How to Spot a Deepfake (Even When It Looks Real)

Deepfakes in 2025 are designed to trick not just the eye — but the brain. That’s why relying only on automated detection tools is no longer enough. Strengthening your own deepfake awareness is one of the most effective defenses against modern deepfake scams.

Here’s what to watch for when learning how to detect deepfakes.

Red flags in videos

Unnatural blinking or facial reactions

AI-generated faces often miss realistic blinking patterns or subtle emotional micro-expressions. If something feels off, trust that instinct.

Weird lighting or inconsistent shadows

When shadows don’t match the environment or move strangely across the face, it’s often a sign of manipulation.

Blurry edges around the mouth or hairline

Deepfakes still struggle with fine details like hair, lips, and facial transitions — especially during movement.

Too-perfect facial symmetry

Real human faces are never perfectly symmetrical. An oddly flawless appearance can be a red flag.
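One of these cues, blinking, can be roughly quantified. A common research approach is the eye aspect ratio (EAR) from Soukupová and Čech (2016): six landmarks around each eye give a ratio that drops sharply when the eye closes, so counting the dips over time yields a blink rate. The sketch below assumes you already have per-frame eye landmarks from a face-landmark detector (not shown). The 0.2 threshold and two-frame minimum are illustrative assumptions you would tune, not established cut-offs, and an odd blink rate is only a weak hint, since many modern deepfakes blink convincingly.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def eye_aspect_ratio(eye: Sequence[Point]) -> float:
    """EAR for six landmarks p1..p6: p1/p4 are the eye corners,
    p2/p3 sit on the upper lid and p6/p5 on the lower lid."""
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)


def count_blinks(ears: Sequence[float], threshold: float = 0.2, min_frames: int = 2) -> int:
    """Count runs of at least `min_frames` consecutive frames with EAR below `threshold`."""
    blinks = 0
    run = 0
    for ear in ears:
        if ear < threshold:
            run += 1
            continue
        if run >= min_frames:
            blinks += 1
        run = 0
    # A blink still in progress when the clip ends also counts.
    if run >= min_frames:
        blinks += 1
    return blinks


def blinks_per_minute(ears: Sequence[float], fps: float, **kwargs) -> float:
    """Blink rate over the whole clip, given one EAR value per video frame."""
    minutes = len(ears) / fps / 60.0
    if minutes == 0:
        return 0.0
    return count_blinks(ears, **kwargs) / minutes
```

In practice you would compute `eye_aspect_ratio` for every frame and compare `blinks_per_minute(ears, fps=30)` for a suspicious clip against genuine footage of the same person, rather than against a single fixed number.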

Red flags in audio

Flat or unnatural emotional tone

Even advanced voice clones may sound robotic, monotone, or emotionally inconsistent during longer speech.

Strange pauses or clipped words

AI-generated audio can include awkward gaps, timing issues, or cut-off syllables.

Overly “clean” sound quality

Real recordings include background noise, reverb, and mic variations. Deepfake audio often feels unnaturally polished.
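The “overly clean” cue can also be checked roughly. Genuine recordings usually keep some background noise even in the pauses between words, so one heuristic is to estimate the noise floor: measure the loudness of short frames and look at the quietest ones. The sketch below uses only Python’s standard library and assumes a 16-bit PCM WAV file. The frame size, the 10th-percentile choice, and the -90 dBFS cut-off are illustrative assumptions, not validated detection thresholds. Studio noise gates and aggressive noise suppression can also produce near-silent pauses, so a very low floor is a reason to verify, not proof.

```python
import math
import struct
import wave
from typing import List, Sequence


def read_wav_mono16(path: str) -> List[float]:
    """Read a 16-bit PCM WAV file and return the first channel as floats in [-1.0, 1.0)."""
    with wave.open(path, "rb") as w:
        if w.getsampwidth() != 2:
            raise ValueError("this sketch only handles 16-bit PCM")
        channels = w.getnchannels()
        raw = w.readframes(w.getnframes())
    count = len(raw) // 2
    ints = struct.unpack("<%dh" % count, raw[: count * 2])
    return [v / 32768.0 for v in ints[::channels]]


def frame_levels_db(samples: Sequence[float], frame_len: int = 1024) -> List[float]:
    """RMS level of each non-overlapping frame, in dBFS."""
    levels = []
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        rms = math.sqrt(sum(s * s for s in frame) / frame_len)
        # Clamp so perfect digital silence maps to -200 dBFS instead of log10(0).
        levels.append(20.0 * math.log10(max(rms, 1e-10)))
    return levels


def noise_floor_db(samples: Sequence[float], frame_len: int = 1024, percentile: float = 0.1) -> float:
    """Level of the quieter frames (10th percentile by default) as a noise-floor estimate."""
    levels = sorted(frame_levels_db(samples, frame_len))
    if not levels:
        raise ValueError("clip is shorter than one frame")
    return levels[int(percentile * (len(levels) - 1))]


def looks_suspiciously_clean(samples: Sequence[float], threshold_db: float = -90.0) -> bool:
    """Hypothetical heuristic: pauses quieter than `threshold_db` suggest processed or synthetic audio."""
    return noise_floor_db(samples) < threshold_db
```

For example, `samples = read_wav_mono16("voicemail.wav")` (a hypothetical file) followed by `noise_floor_db(samples)` gives a rough floor you can compare with other recordings made on the same phone or line.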

Always check the context

Reverse-search the content

Use Google Lens for images, or a video verification tool such as InVID, to see where the content appeared before. Context matters for AI fraud protection.

Verify the source

If a video or audio clip doesn’t come from an official, trusted, or verified account, approach it with caution.

Look for behavioral mismatches

Pay attention to gestures, emotions, or reactions that don’t align with what’s being said.
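Reverse search works because a re-uploaded or lightly edited copy stays visually close to its original. Google Lens and InVID do not publish exactly how they match images, so the sketch below uses a simple, well-known stand-in, the average hash (aHash), to show the idea: two frames that differ only in brightness or mild compression produce nearly identical 64-bit fingerprints. It assumes frames are already decoded into grayscale pixel grids (for example with an imaging library, not shown), and any distance threshold for calling two frames a match is an assumption you would need to tune.

```python
from typing import Sequence


def average_hash(gray: Sequence[Sequence[float]], size: int = 8) -> int:
    """Average hash (aHash): shrink the image to size x size cells by block
    averaging, then emit one bit per cell: 1 if brighter than the mean."""
    height, width = len(gray), len(gray[0])
    if height < size or width < size:
        raise ValueError("image is smaller than the hash grid")
    cells = []
    for r in range(size):
        y0, y1 = r * height // size, (r + 1) * height // size
        for c in range(size):
            x0, x1 = c * width // size, (c + 1) * width // size
            block = [gray[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            cells.append(sum(block) / len(block))
    mean = sum(cells) / len(cells)
    bits = 0
    for value in cells:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Number of differing bits; small values suggest near-duplicate images."""
    return bin(a ^ b).count("1")
```

Hashing a keyframe from a suspicious clip and comparing it with keyframes from an earlier upload is one way to check whether both come from the same footage; a small distance is a cue to compare the two side by side for edits. Simple hashes like this are easily thrown off by crops and mirroring, which is one reason dedicated tools exist.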

Use AI to fight AI

Ironically, some of the best deepfake awareness strategies involve AI itself. These tools can support AI fraud protection when used carefully:

Microsoft Video Authenticator

Analyzes subtle pixel changes and metadata to highlight manipulated frames.

Reality Defender

A browser-based tool that flags suspected deepfake content while browsing news or social platforms.

Sensity.ai

Used by journalists and investigators to detect manipulated media and biometric deepfakes at scale.

These tools aren’t perfect — and shouldn’t replace human judgment — but they’re improving quickly, just like the deepfakes they aim to detect.

4. How to Protect Yourself From Deepfakes (And Help Others)

Spotting a deepfake is important, but protecting yourself is even more critical. As these AI-generated fakes become more realistic, deepfake awareness in 2025 also means building smarter digital habits. Here’s what that looks like today.

Think before you share

If a video seems shocking, emotional, or too polished, pause before you hit share.

Virality doesn’t equal truth — give yourself a few seconds to analyze it.

If something feels “off,” trust your instincts. In 2025, deepfakes are designed to bypass logic and trigger emotions quickly.

Verify the source

Always check whether the content is being reported by reputable news organizations or posted by verified creators.

If it only appears on anonymous accounts or obscure repost pages, treat it as suspicious.

You can also reverse-search it using tools like InVID or Google Lens.

Limit your public content

Your voice, face, and online stories can all be harvested to create personalized deepfakes.

Be especially mindful on platforms that offer little control over privacy. Adjust your settings, review your tagged media, and think twice before posting personal videos — especially voice messages.

Want to explore safer AI tools? Check out our Top AI Tools for 2025 – Trusted and Transparent guide.

Talk about it with others

Most people still underestimate how real deepfakes have become.

Talking about deepfake awareness with friends, colleagues, or family members helps build a culture of caution and digital literacy.

This kind of informal education can stop fake news and scams before they spread.

Use smart tools

Technology can also help defend against manipulation. Try:

Reality Defender – Browser extension that flags manipulated videos as you scroll

Microsoft Video Authenticator – AI that scans for invisible manipulation signals

Sensity.ai – Used by journalists and platforms for large-scale fake media detection

These tools are evolving quickly — and will be key in the fight against fake media.

Report what looks fake

If you come across something suspicious, report it to the platform.

Most social media sites have reporting options for misleading content. The more people report, the better detection systems become over time.

Remember, protecting yourself in 2025 doesn’t require being paranoid — just being prepared.

In 2025, deepfake awareness means using your judgment, your tools, and your voice to keep digital spaces authentic.

5. The Role of Digital Literacy

No matter how advanced technology becomes, human judgment still matters most. That’s why digital literacy is no longer optional — it’s essential. In an era shaped by AI-generated content, building strong deepfake awareness starts with how we think, not just the tools we use.

Understand how media is created

Knowing that images, voices, and videos can now be generated or altered by AI helps you question what you see online. When something feels slightly off, it often is. This mindset is the foundation of real deepfake awareness and long-term AI fraud protection.

Teach critical thinking early

Whether you’re a parent, teacher, or team leader, helping others recognize manipulation makes a real difference. Simple habits — like comparing real content with AI-generated examples — can strengthen instincts and reduce the risk of falling for deepfake scams.

Don’t just consume — analyze

Instead of scrolling passively, pause and ask: Who shared this? Why now? What’s the goal?

These small questions change how you experience online content and naturally sharpen your ability to detect deepfakes.

Rely on trustworthy sources

Stick to platforms and publications that value fact-checking and accountability. Avoid sensationalist content designed to provoke emotion without evidence. When it comes to deepfake awareness, trusted sources make all the difference.

Stay curious, not alarmed

The deepfake landscape evolves quickly, but staying informed doesn’t mean panicking. Following reliable outlets like NPR’s AI coverage or MIT Technology Review can help you stay updated with clarity and context.

6. Ethical Risks We Need to Watch

Deepfakes aren’t just a tech problem — they raise serious ethical questions that affect privacy, trust, and public safety.

Misinformation at scale

One deepfake can go viral in minutes and influence thousands before it’s even flagged. In elections, conflicts, or emergencies, that can mean real damage.

Loss of personal agency

AI can recreate your voice or face without consent. That’s more than creepy — it’s a threat to identity and personal security. People have already been targeted by deepfakes in scams and impersonation attempts.

Erosion of trust

If we start to doubt everything we see or hear, it becomes harder to trust real content. The long-term impact could weaken journalism, justice systems, and social relationships.

Bias in detection tools

Even the tech we use to fight deepfakes isn’t perfect. Some AI detectors work better on certain languages, accents, or skin tones — raising concerns about fairness and accuracy.

Who is responsible?

Should platforms act faster? Should governments regulate more? Should creators be punished? Right now, the rules are still blurry — and we need clearer accountability.

7. Real Examples You Should Know About

To understand the true impact of deepfakes, it helps to look at real situations — not just hypotheticals. These aren’t science fiction. They’ve already happened.

The Pope in a puffer jacket

A viral image from 2023 showed Pope Francis in a white designer puffer jacket, but it was AI-generated with Midjourney. Many people believed it was real, showing how easily even attentive viewers can be fooled.

Fake calls from a CEO

In multiple corporate fraud cases, scammers used AI-generated voice calls to impersonate executives and trick employees into transferring funds. Some companies lost millions.

Political manipulation

During recent elections around the world, deepfakes have been used to fabricate speeches or alter interviews, aiming to sway public opinion or discredit opponents.

False celebrity endorsements

AI-generated ads have used deepfakes of celebrities to promote sketchy crypto schemes, supplements, and fake apps — all without their permission.

Personal revenge content

One of the darkest uses of deepfakes involves non-consensual imagery, especially targeting women. Many victims only learn about it after the content spreads.

These examples aren’t meant to scare — but to show why staying aware matters. The more we learn, the better we protect ourselves and others.

8. What Platforms Are Doing (and Where They Fail)

Social media and tech platforms are under growing pressure to fight deepfakes — but their responses vary widely in speed, transparency, and effectiveness.

Meta (Facebook, Instagram)

Meta now labels some AI-generated content and invests in detection tools. However, enforcement is inconsistent, and harmful deepfakes often spread faster than they’re removed.

TikTok

TikTok requires creators to label AI-generated content — but compliance is low, and the line between parody and manipulation isn’t always clear.

YouTube

YouTube introduced policies to remove synthetic content that misleads about elections or causes harm. Still, enforcement depends on user reporting and takes time.

X (formerly Twitter)

X has fewer content moderators than before and more lenient policies. Deepfakes can go viral quickly, especially when tied to trending political topics.

Google and Microsoft

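These companies have invested in tools like Google DeepMind’s SynthID, which embeds imperceptible watermarks in content generated by Google’s AI models and can later detect them, and Microsoft’s Video Authenticator, which gives a confidence score that a video has been manipulated. But these are not yet widely integrated into consumer-facing platforms.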

The missing link

Most platforms still rely on users to report content and don’t verify uploads proactively. Worse, there’s no universal standard for labeling or removing deepfakes.

Until stronger regulations arrive, responsibility still falls largely on users — and that’s a system we all need to navigate wisely.

9. Final Thoughts and What We Can Do Next

Deepfakes aren’t going away — and neither is AI. But the way we respond to these technologies can shape their impact.

At AI Digital Space, we believe the future isn’t about rejecting tech — it’s about using it wisely. Staying informed, questioning what we see, and helping others do the same is how we protect truth without losing progress.

Personally, I think the more digital noise we face, the more valuable authenticity becomes. In the long run, what’s real — human, flawed, grounded — will stand out even more. But spotting it takes effort.

So here’s what we can do next:

Stay aware. Stay curious. And help others learn too.

AI should serve us, not confuse us — and that starts with understanding how it really works.

If you found this helpful, feel free to explore more tools and guides on our website.

We’re building a space that’s not just about following trends — but about thinking deeper.

If you want to deepen your deepfake awareness and better understand how AI-generated content can mislead, manipulate, or quietly influence decisions, these articles expand naturally on what we’ve covered here:

→ AI Voice Replication – Best Tools, Use Cases & What to Watch Out For

→ What Are AI Hallucinations? Understanding the Risks Behind Smart Answers

→ How AI Understands Visual Data – Inside the Black Box

→ Avoid These Common AI Tool Scams – What to Look For Before Signing Up

Each of these focuses on a different layer of the same challenge: recognizing manipulation early, understanding how AI-generated content is created, and learning how to respond calmly and critically.