In this guide

- Why AI Answers Change Every Time (And How to Get Stable Results)

- What We Personally Noticed Using AI Every Day

- The Real Question People Are Asking (Even If They Don’t Say It)

- The ASK–ANCHOR–LOCK Method (How to Get Stable AI Results)

- What AI Inconsistency Means for Reliability, Trust, and Real Decisions

- Final Verdict + Q&A: How to Use AI Without Losing Trust

Disclosure:

Some links in this post are affiliate links. If you click and purchase, we may earn a small commission at no extra cost to you. Thank you for supporting AIDigitalSpace.com and helping us keep this content free and useful.

Why AI Answers Change Every Time (And How to Get Stable Results)

Quick note for readers:

If you’ve ever asked the same question to an AI and received different answers, you’re not doing anything wrong. This guide isn’t about “how AI works in theory” — it’s about how to actually use AI in real life without losing trust in the answers.

Different answers to the same question are real: you’re not imagining things, and you’re definitely not alone.

In 2026, AI tools like ChatGPT, Gemini, and Claude are part of daily life. We use them for work emails, studying, planning trips, even making decisions. And yet, one of the most common frustrations people have is this:

“Why does AI change its answer every time I ask the same thing?”

At AIDigitalSpace, we see this question constantly — not from tech experts, but from normal people who just want clear, reliable help. And honestly? The confusion makes sense.

Here’s the important part upfront:

AI changing its answers doesn’t mean it’s broken. But it does mean you need to understand how AI actually works if you want stable results.

Let’s break it down, simply and honestly.

What We Personally Noticed Using AI Every Day

At AIDigitalSpace, we use AI tools daily — for writing, research, planning, and testing ideas.

And one thing became obvious very quickly: changing answers are one of the most confusing parts of AI for everyday users.

Sometimes the change is small.

Other times, the tone, structure, or even the conclusion is different — even when the question feels identical.

At first, this feels like a bug. But after using AI consistently, we realized something important: AI isn’t inconsistent — it’s responsive.

Looking closer, we saw that these variations usually follow patterns tied to context, wording, and expectations.

And once you understand what AI responds to, frustration turns into control.

The Real Question People Are Asking (Even If They Don’t Say It)

Most users don’t actually care about AI architecture or machine learning models.

What they’re really asking is:

“Can I trust AI answers, or not?”

And that’s a fair question, especially once you start noticing that AI answers change from one response to the next.

When AI gives different answers, the reaction is often emotional before it’s technical:

- students worry about correctness

- professionals worry about credibility

- beginners worry they’re “using it wrong”

Here’s our honest take, based on daily use and testing:

AI is reliable for patterns, structure, ideas, and speed — but not for absolute truth.

The reason AI answers change explains this. AI outputs aren’t fixed facts stored in memory. They’re probabilistic responses generated in real time. The AI isn’t recalling “the answer”; it’s predicting a likely response, piece by piece, based on your wording and the conversation so far.

That distinction matters a lot, because once you understand why AI answers change, you stop expecting certainty and start using AI for what it actually does best.

This is the same reason voice assistants behave differently depending on how you phrase a request — they interpret intent rather than retrieve fixed answers (here’s a simple explanation of how voice assistants actually work).
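To make the idea of "predicted, not recalled" concrete, here is a minimal Python sketch of how a probabilistic text generator might choose its next word. It is a toy illustration under our own assumptions, not the code behind ChatGPT, Gemini, or Claude: the function name `sample_next_word`, the word scores, and the `temperature` value are all made up for this example.

```python
import math
import random

def sample_next_word(scores, temperature, rng):
    """Pick one word from `scores` (word -> raw score) by temperature sampling."""
    if temperature <= 0:
        # Zero temperature: always take the top-scoring word (no randomness).
        return max(scores, key=scores.get)
    # Softmax with temperature: a higher temperature flattens the odds,
    # so lower-scoring words get picked more often.
    top = max(scores.values())
    weights = {w: math.exp((s - top) / temperature) for w, s in scores.items()}
    words = list(weights)
    return rng.choices(words, weights=[weights[w] for w in words], k=1)[0]

# Hypothetical scores for the word that follows
# "The best way to learn a language is ..."
scores = {"practice": 2.0, "immersion": 1.8, "patience": 1.2, "apps": 0.5}

rng = random.Random()  # unseeded, so each run can differ
varied = [sample_next_word(scores, 1.0, rng) for _ in range(5)]
stable = [sample_next_word(scores, 0.0, rng) for _ in range(5)]

print(varied)  # a mix of words that can change from run to run
print(stable)  # the top-scoring word every time
```

In this toy, the same "question" (the same scores) produces different words whenever randomness is involved, and identical words when it is switched off. Real assistants are far more complex, and everyday chat apps may not let you change a setting like this, which is why the wording of your prompt is usually the lever you actually control.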

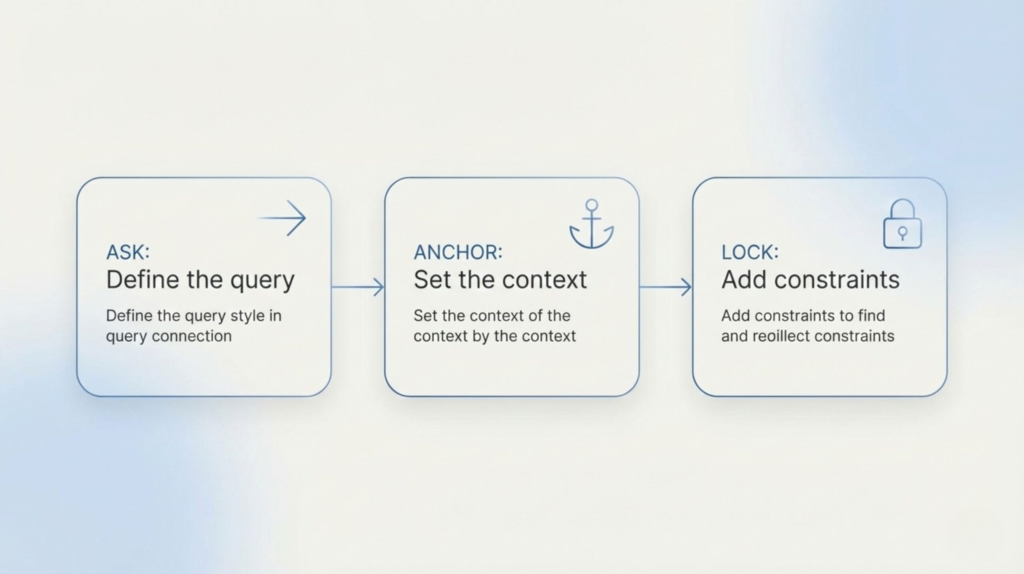

The ASK–ANCHOR–LOCK Method (How to Get Stable AI Results)

If you want more consistent AI answers in 2026, you need to stop asking casually and start asking intentionally.

This simple method is designed to reduce answer-to-answer variation, and it works across ChatGPT, Gemini, and other AI tools.

Ask

Write your question clearly, without stacking multiple requests. One goal per prompt.

When prompts are vague or overloaded, the AI has more room to interpret them differently each time, so answers drift from one response to the next.

Anchor

Tell the AI how you want the answer. This helps reduce variation caused by interpretation.

You can anchor the response by specifying:

- format

- tone

- depth

- constraints

Example:

“Answer in bullet points, for a beginner, no assumptions.”

Anchoring matters because a lack of guidance is one of the main reasons why AI answers change even when the topic stays the same.

Lock

Add a simple stability instruction to limit unnecessary variation.

Examples:

- “Use the same logic throughout.”

- “Do not change assumptions.”

- “Base the answer on one consistent approach.”

This step targets variation inside a single answer by asking the AI to keep its reasoning aligned from start to finish.

This method doesn’t eliminate variation completely — but it dramatically reduces it, making AI outputs far more predictable and usable.
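If you reuse prompts often, the three steps can be turned into a small template. The Python sketch below is one possible way to do that, not an official tool: `build_prompt`, the `Format:` and `Consistency:` labels, and the compound interest question are hypothetical choices made for this example.

```python
def build_prompt(ask, anchor=None, lock=None):
    """Combine one question (Ask) with optional Anchor and Lock instructions."""
    parts = [ask.strip()]  # Ask: one clear goal per prompt
    if anchor:
        # Anchor: how the answer should look (format, tone, depth, constraints)
        parts.append("Format: " + "; ".join(anchor))
    if lock:
        # Lock: stability instructions that limit unnecessary variation
        parts.append("Consistency: " + " ".join(lock))
    return "\n".join(parts)

prompt = build_prompt(
    ask="Explain how compound interest works.",
    anchor=["bullet points", "for a beginner", "no assumptions"],
    lock=["Use the same logic throughout.", "Do not change assumptions."],
)
print(prompt)
```

Pasting the same three-line prompt each time keeps your side of the conversation fixed, so any remaining differences come from the AI rather than from small changes in how you asked.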

| Common issue | Why it happens | What actually helps |
|---|---|---|
| Different answers each time | AI generates responses instead of retrieving fixed answers | Apply the Ask–Anchor–Lock method |
| Answers change mid-conversation | Previous context keeps influencing the response | Start a fresh chat for important questions |
| Conflicting advice on the same topic | No single source of truth is enforced | Ask for sources, assumptions, or side-by-side comparisons |

What AI Inconsistency Means for Reliability, Trust, and Real Decisions

At this point, it’s important to be clear about one thing: AI giving different answers is not a technical flaw — it’s a design choice.

Modern AI systems are built to be adaptive. They adjust wording, structure, and emphasis based on context, probability, and interpretation. That flexibility is what makes AI useful for brainstorming, writing, and learning. But it also introduces a limit that many people only discover through frustration.

This behavior is closely related to what are commonly called AI hallucinations — moments when an AI produces confident but incorrect information, even though the answer sounds convincing. Check our full guide on AI hallucinations.

Here’s the key takeaway we’ve learned through daily use:

AI is reliable for patterns, explanations, and structure — but not for final authority.

This concern isn’t just anecdotal. Regulatory frameworks like the European Union’s Artificial Intelligence Act now include explicit requirements for transparency, risk mitigation, and accountability in AI systems, highlighting that reliability and consistency are central to how these tools should be governed (see the EU AI Act high-level summary).

This matters most when AI is used for:

- medical or health-related questions

- legal or financial decisions

- news interpretation

- anything with real-world consequences

In these cases, the problem isn’t that AI answers change.

The problem is assuming consistency equals correctness.

In 2026, using AI responsibly means understanding that:

- confident answers are not the same as verified answers

- repeated answers are not guaranteed truths

- clarity does not equal accuracy
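One way to keep consistency and correctness separate is to measure only the first and verify the second yourself. The Python sketch below assumes you have asked the same question several times and saved the short answers by hand; the `agreement` function and the sample answers are hypothetical.

```python
from collections import Counter

def agreement(answers):
    """Return the most common answer and the share of runs that gave it."""
    normalized = [a.strip().lower() for a in answers]
    if not normalized:
        raise ValueError("need at least one answer")
    top, count = Counter(normalized).most_common(1)[0]
    return top, count / len(normalized)

# Hypothetical short answers from asking the same yes/no question five times.
answers = ["Yes", "yes", "No", "Yes ", "Yes"]
top, share = agreement(answers)

# A high share means the AI is consistent on this question.
# It does NOT mean the answer is correct; that still needs an outside check.
print(top, share)
```

A low share is a useful warning sign that the question is ambiguous or the AI is unsure. A high share only tells you the answer is stable, so anything with real-world consequences still needs independent verification.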

This is why we always recommend treating AI as a thinking partner, not a decision-maker. When AI output really matters, the safest habit is simple: use AI to narrow options, then verify independently.

That mindset doesn’t make AI less powerful — it makes you more in control.

Final Verdict + Q&A: How to Use AI Without Losing Trust

AI answers change because AI is designed to adapt — not because it’s broken. Once you understand why AI answers change, the experience becomes far less frustrating.

In 2026, the biggest mistake people make is expecting AI to behave like a search engine or an encyclopedia. It’s neither. AI is closer to a thinking assistant that responds to how you ask, what you ask, and the context you build — which is exactly why AI answers change from one interaction to another.

When used well, AI is extremely useful:

- for structuring ideas

- for learning faster

- for exploring options

- for saving time

But when used blindly, AI can feel unreliable — especially if you don’t understand why AI answers change behind the scenes.

Our rule of thumb is simple:

use AI for thinking support, not final decisions.

If something really matters (money, health, legal choices, or important work), AI should help you understand the problem, not decide it for you. When you approach AI this way, the fact that its answers change stops being a weakness and becomes part of its value.

FAQ

Q: Why do AI answers change even when I copy-paste the same question?

A: AI doesn’t retrieve fixed answers. It generates responses each time based on probability, context, and interpretation. Even identical prompts can produce different results.

Q: Is AI unreliable, or am I using it wrong?

A: In most cases, AI isn’t unreliable — expectations are. AI is designed to adapt, not to repeat identical outputs like a search engine.

Q: Can I force AI to give the same answer every time?

A: You can’t remove variation completely, but you can reduce it by giving clear instructions, fixing assumptions, and limiting conversation context.

Q: Which AI tools are more consistent for work or study?

A: Search-oriented AI tools are usually more stable for factual tasks, while generative assistants work better for ideas, structure, and drafting.

Want to use AI better — not just more?

At AIDigitalSpace, we don’t chase hype. We explain how AI actually behaves, where it helps, and where it can quietly mislead, all in simple, human language.
