Affiliate Disclaimer:

Some of the links in this post are affiliate links. This means we may earn a small commission if you click through and make a purchase, at no extra cost to you. We only recommend tools we truly believe offer value.

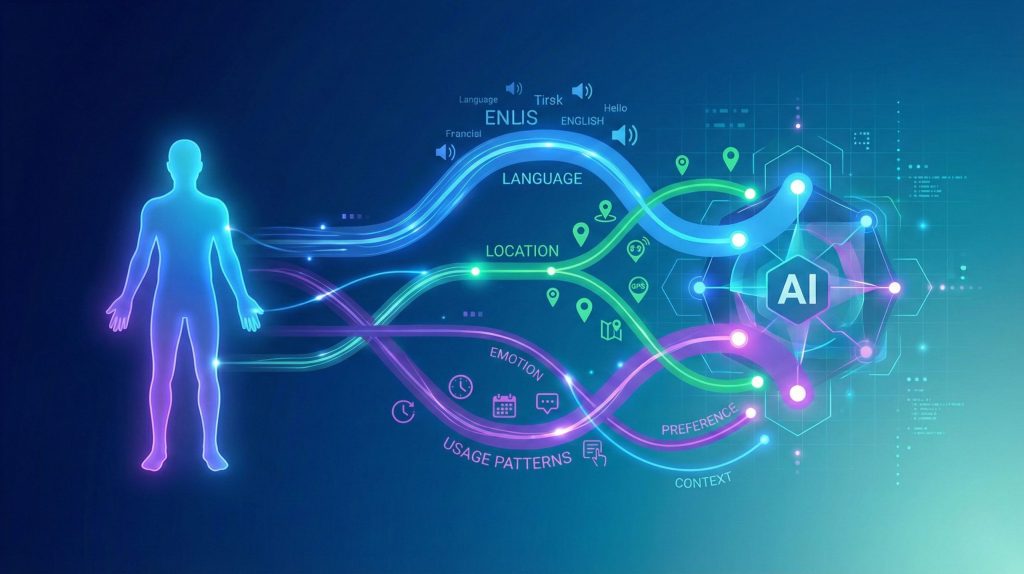

1. Why AI Tools Behave Differently for Each of Us

If you’ve ever asked an AI tool the same question as someone else and received a completely different answer, you’re not imagining it. This happens because AI tools behave differently for each user by design.

Many of us notice it first with tools like ChatGPT, Gemini, or Claude. One person gets a detailed explanation, another gets a cautious or simplified response. It feels random — but it isn’t.

The reason AI tools behave differently is that they don’t respond in isolation. They adapt based on context.

This context can include your location, language, usage patterns, and whether you’re logged in. Over time, these signals influence how AI tools behave differently for you compared to other users.

We’ve seen this same mechanism in social platforms too. If you’ve read our guide on how AI controls what you see on TikTok and Instagram, the logic is similar: algorithms personalize experiences instead of treating everyone the same.

This isn’t necessarily good or bad on its own — but it does matter. When AI tools behave differently, they can shape what information we see, how confident an answer sounds, and how much we trust the result.

In this guide, we’ll explain what’s really happening behind the scenes, what data is involved, and how to use AI tools more consciously — without overcomplicating things or creating unnecessary fear.

For transparency, we’ll also reference official sources where relevant, including OpenAI’s public documentation on personalization and safety systems: OpenAI Policies.

If you want a deeper understanding of how digital tracking works, why personalization exists, and what privacy really means in 2025, Privacy Is Power explains it clearly and without fear-mongering.

2. The Real Reason Your AI Answers Don’t Match Others

When people realize that AI tools behave differently, the first assumption is usually that something is “wrong” — with the tool, the prompt, or even the user.

In reality, the reason your AI answers don’t match someone else’s has very little to do with intelligence or skill. It comes down to how modern AI systems manage context and risk.

AI tools are designed to adapt. They don’t just process a question — they process who is asking it, how it’s asked, and under what conditions. This is why AI tools behave differently even when the input looks identical.

For example:

A logged-in user may receive more detailed responses than a guest

Certain topics trigger stricter safety filters

Regional rules can influence what the AI is allowed to say

Previous interactions can shape tone and depth

This explains why two people can share the same prompt and still walk away with different results.

We’ve seen this pattern clearly in recommendation systems too. Platforms don’t show everyone the same content — they optimize for engagement and safety.

What’s important to understand is that AI tools behave differently on purpose. The goal isn’t fairness — it’s consistency within rules, policies, and user context.

OpenAI and other providers openly state that responses may vary based on safety systems and personalization layers: OpenAI Safety.

Once you understand this, the inconsistency feels less confusing — and easier to manage.

3. What AI Tools Actually Track About Users

To understand why AI tools behave differently, we need to be clear about one thing: most AI tools don’t “remember you” like a person would — but they do track signals.

These signals help systems decide how to respond, how much detail to give, and how cautious to be. This is not the same as spying or recording personal conversations — but it is enough to explain why AI tools behave differently from one user to another.

| What AI tracks | What this tells the system | Why it affects your answers |
|---|---|---|
| Account status (logged in or guest) | Whether extended features, context handling, or memory can be applied. | Logged-in users may receive deeper or more detailed responses. |
| Language and region | Which legal, cultural, and safety rules must be followed. | Answers can change depending on where you access the tool. |
| Prompt wording and structure | Your intent, confidence level, and topic sensitivity. | Vague prompts often lead to safer or more generic replies. |
| Feature usage patterns | How you usually interact with the AI tool. | Repeated workflows can subtly shape default behavior. |
| Safety-related interaction signals | How cautious or restricted responses should be. | Sensitive topics may trigger softer or filtered answers. |

A useful comparison is how recommendation engines work. They don’t need to know who you are — only how you interact. We explored this mechanism in more detail in our guide on AI behavior tracking.

4. How AI Personalization Really Works Behind the Scenes

Now that we know what signals AI tools track, the next question is obvious: how does personalization actually happen?

At a high level, AI personalization is not about “knowing who you are.” It’s about adjusting responses in real time based on probability, risk, and context.

When you ask a question, the system evaluates:

The topic you’re asking about

How similar questions are usually handled

Which safety rules apply

How confident or cautious the response should be

This is why AI tools behave differently even without storing personal memories. The model predicts the safest and most useful next response — not the same response for everyone.
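To make the idea concrete, here is a toy sketch of how context signals could change a response without any personal memory being involved. Everything in it is our own assumption for illustration: the `RequestContext` fields, the topic and region sets, and the `response_settings` function are hypothetical and do not describe how any real provider's system works.

```python
from dataclasses import dataclass

# Hypothetical signals, loosely based on the ones discussed in this guide.
# Real providers do not publish their exact inputs.
@dataclass
class RequestContext:
    logged_in: bool
    region: str
    topic: str

# Illustrative values only, invented for this sketch.
SENSITIVE_TOPICS = {"health", "finance", "legal"}
STRICT_REGIONS = {"region-a"}

def response_settings(ctx: RequestContext) -> dict:
    """Map context signals to a made-up caution score and detail level."""
    caution = 0
    if ctx.topic in SENSITIVE_TOPICS:
        caution += 1  # sensitive topics raise caution
    if ctx.region in STRICT_REGIONS:
        caution += 1  # stricter regional rules raise caution
    detail = "extended" if ctx.logged_in else "basic"
    return {"caution": caution, "detail": detail}

# Two users, same kind of request, different context.
guest = response_settings(RequestContext(False, "region-a", "health"))
member = response_settings(RequestContext(True, "region-b", "cooking"))
print(guest)
print(member)
```

In this sketch the guest ends up with a higher caution score and a basic detail level, while the logged-in user gets the opposite, even though nothing about either person is stored.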

Why personalization feels inconsistent

From the outside, this process can feel unpredictable.

One day an AI tool is creative and open.

Another day it feels cautious or limited.

That’s because personalization is influenced by:

Ongoing policy updates

Regional regulations

System-level safety tuning

Changes in how the model balances usefulness vs. risk

We’ve seen similar behavior shifts with search engines and social platforms over the years. If you’re curious how these invisible adjustments affect what we see online, this connects closely to our article on how AI controls what you see on social feeds.

Personalization vs manipulation (important distinction)

Personalization is meant to improve relevance.

Manipulation is when systems push certain outcomes without transparency.

Most major AI providers claim to focus on the first — but the line isn’t always clear. That’s why understanding how personalization works matters.

The key takeaway

AI personalization isn’t random — and it isn’t personal in the human sense either.

It’s a dynamic system constantly adjusting to reduce risk, increase usefulness, and stay within rules. Once you understand this, the behavior of AI tools becomes easier to interpret — and less frustrating to use.

5. Common Myths, Mistakes, and Misunderstandings About AI Behavior

Once people notice that AI tools behave differently, a few assumptions tend to spread very quickly — and most of them are misleading.

Let’s clear up the most common ones.

Myth 1: “The AI remembers everything about me”

This is one of the biggest misunderstandings.

Most AI tools do not remember you as a person across sessions in a human way. They don’t build a personal profile with emotions, intentions, or long-term memory unless specific features are enabled. What they use instead are signals and context, not personal identity.

Confusing these two ideas often leads to unnecessary fear.

Myth 2: “If I get a worse answer, I did something wrong”

Not true.

When AI tools behave differently, it’s rarely about user skill. A shorter, more cautious answer often comes from topic sensitivity or safety rules, not from poor prompting.

This is why copying someone else’s prompt doesn’t always produce the same result.

Myth 3: “Using AI in private mode fixes everything”

Private or guest modes can reduce some tracking, but they don’t eliminate system-level rules. Safety filters, regional limits, and model behavior still apply.

Privacy modes change how much context is available — not how the AI fundamentally works.

Common mistakes users make

Beyond myths, there are also practical mistakes that reduce the quality of AI responses:

Asking vague questions and expecting precise answers

Treating AI like a search engine instead of a reasoning tool

Ignoring uncertainty and not asking for sources or alternatives

We cover these issues in more detail in our guide on how people misread AI confidence and hallucinations.
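One way to avoid the mistakes above is to build prompts from a fixed template that always states the goal and context and asks for reasoning and sources. The sketch below is a minimal example of that habit; the `build_prompt` helper and its wording are our own illustration, not a feature of any AI tool.

```python
def build_prompt(goal: str, context: str, question: str) -> str:
    """Assemble a prompt that states goal and context and asks for
    reasoning, uncertainty, and sources or alternatives."""
    parts = [
        f"Goal: {goal}",
        f"Context: {context}",
        f"Question: {question}",
        "Please explain your reasoning, say where you are uncertain, "
        "and list sources or alternative answers where they exist.",
    ]
    return "\n".join(parts)

# Example values are placeholders; replace them with your own.
prompt = build_prompt(
    goal="Decide whether to enable chat history",
    context="I use the tool for work notes and general questions",
    question="What changes when chat history is turned off?",
)
print(prompt)
```

The resulting text can be pasted into any chat tool. The point is less the code than the structure: a specific goal, some context, and an explicit request for uncertainty and alternatives.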

Why clearing these myths matters

When we misunderstand how AI tools behave differently, we either trust them too much — or reject them completely.

Neither extreme is helpful.

The goal is informed use: knowing what AI can do, what it can’t do, and where human judgment still matters.

6. Ethical Reflection: Should AI Treat Users Differently at All?

At this point, a fair question comes up: should AI tools behave differently for different users in the first place?

From an ethical perspective, personalization sits in a gray area. On one hand, adapting responses can make AI more useful, safer, and easier to understand. On the other, it risks creating unequal access to information without users realizing it.

When AI tools behave differently, the concern isn’t personalization itself — it’s lack of transparency.

If two people receive different answers but don’t know why, trust starts to erode.

This is especially important when AI is used for:

Learning and education

Health or wellbeing topics

Financial or legal guidance

News and public information

In these contexts, subtle differences in tone or detail can change how people interpret reality.

We’ve explored similar concerns in our article on bias in AI training and how invisible forces shape answers.

The ethical balance that matters

Ethical AI isn’t about removing personalization entirely. It’s about balance.

That balance includes:

Clear explanations of limitations

Consistent safety standards

The ability for users to question or verify outputs

Respect for user autonomy

When users understand why AI tools behave differently, personalization becomes a feature — not a manipulation.

Our position at AIDigitalSpace

We believe AI should:

Adapt to users without misleading them

Protect people without over-filtering reality

Be powerful, but explainable

That’s why awareness matters more than fear. The more we understand how AI works behind the scenes, the better choices we can make — as users, creators, and readers.

7. Final Insights: How to Regain Control When Using AI Tools

Understanding why AI tools behave differently is useful — but what really matters is how we apply that knowledge in daily use.

| What to do | Why it helps | How to apply it today |
|---|---|---|
| Be explicit with prompts | Clear intent reduces over-filtering and vague answers. | State your goal, context, and ask for reasoning or alternatives. |
| Compare answers when accuracy matters | Different tools reveal different blind spots. | Test key questions across two AI tools or modes. |
| Review privacy and personalization settings | Hidden defaults influence how AI tools behave differently. | Check history, memory, and permissions regularly. |
| Question confident answers | AI can sound certain even when it’s wrong. | Ask for sources, uncertainty, or a second explanation. |
| Choose transparent AI tools | Explainability builds long-term trust. | Prefer tools that document limits and data use clearly. |
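If you want to compare answers from two tools in a more structured way, a few lines of Python are enough. The sketch below assumes you have copied both answers in as plain text; the function names are our own, and the word-level similarity score from Python's standard `difflib` module is only a rough signal, not a measure of which answer is correct.

```python
from difflib import SequenceMatcher

def similarity(answer_a: str, answer_b: str) -> float:
    """Return a 0..1 ratio of how similar two answers are, word by word."""
    words_a = answer_a.lower().split()
    words_b = answer_b.lower().split()
    return SequenceMatcher(None, words_a, words_b).ratio()

def only_in_first(answer_a: str, answer_b: str) -> set:
    """Words that appear in the first answer but not in the second."""
    return set(answer_a.lower().split()) - set(answer_b.lower().split())

# Placeholder answers; paste in the real outputs you want to compare.
tool_one = "Turning off history limits stored context"
tool_two = "Turning off history may limit personalization"

print(round(similarity(tool_one, tool_two), 2))
print(sorted(only_in_first(tool_one, tool_two)))
```

A low score, or a long list of words unique to one answer, is a cue to read both answers closely and ask each tool for sources before relying on either.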

For a practical walkthrough on permissions and controls, we’ve created a clear step-by-step guide here:

And if you want to understand why confident AI answers can still be misleading, this deep dive helps put things into perspective:

8. FAQ: AI Personalization, Tracking, and User Control

Q: Why do AI tools behave differently for different users?

A: AI tools behave differently because they adapt responses based on context, safety rules, and usage signals rather than giving identical answers to everyone.

Q: Are AI tools tracking personal information about me?

A: Most AI tools do not track personal identity, but they do collect interaction signals such as language, region, and prompt style to manage responses safely.

Q: Does using private or guest mode stop AI personalization?

A: Private or guest modes can reduce stored context, but they do not disable system-level rules or safety filters that affect how answers are generated.

Q: Why does the same prompt give different answers on different days?

A: Changes in policies, model updates, and safety tuning can influence how AI tools behave differently even when the prompt stays the same.

Q: Can I fully control how AI tools personalize responses?

A: You can’t control everything, but you can influence results by adjusting prompts, reviewing settings, and comparing outputs across tools.

If you’d like more practical, easy-to-understand guides on AI, privacy, and the tools we actually use, you can join our newsletter — we share only what’s genuinely useful.